Csat Comprehensive Guide

This customer satisfaction (Csat) blog is a comprehensive guide for defining, measuring, tracking, benchmarking, and improving customer satisfaction to deliver great call center customer service. The Csat guide was developed based on SQM Group's over 25 years of measuring, benchmarking, and improving customer satisfaction with leading North American call centers. This call center Csat guide will answer the following five customer satisfaction questions.

Discover the following:

- What is Csat

- Why Measure Csat

- How to Measure Csat

- How to Improve Csat

- How to Measure Csat Using Software

Figure 1: Call Center Csat Comprehensive Guide Questions

1. What is Customer Satisfaction?

Call Center Csat Definition

The customer satisfaction metric quantifies how satisfied customers are with their call center experience resolving an inquiry or problem. The call center Csat metric is viewed as a key performance indicator (KPI) and is one of the most used metrics for measuring and managing customer service.

Moreover, the Csat metric measures satisfaction with the call center's overall customer service, and the agent, as well as important moments of truth such as finding the phone number, reaching the right agent, greeting the customer, understanding the reason for the call, helping the customer, caring about the customer, resolving the call, and after-call work.

The Csat metric provides call center employees with voice of the customer (VoC) feedback on how satisfied customers are with the service they experienced, and it provides insights for improving the people, processes, policies, and technologies needed to deliver great customer service.

VoC is a term that describes a customer's feedback to understand their expectations and satisfaction doing business with an organization and to identify service delivery improvement opportunities. The VoC practice lets the customer be the judge of their experiences.

VoC uses listening posts (e.g., phone surveys, email surveys, call monitoring, customer relationship management, social media, and employees) to understand customer needs, wants, expectations, experiences, satisfaction, and service improvement opportunities.

Furthermore, the Csat metric is a driver for customers wanting to refer an organization to others, willingness to purchase more products and services from the organization, and continuing to do business with an organization.

2. Why Measure Customer Satisfaction?

There is a strong business case for a call center to strive to improve or deliver great customer service. However, accurately determining whether a call center has improved or provided great customer service requires measuring customer satisfaction using a post-call survey method.

Assessing customer service can be challenging without measuring customer satisfaction. After all, letting the customer judge the customer service they received is the most accurate way to assess Csat. It sounds simple to let the customer be the judge of service and use the feedback to improve; however, many call center managers find it difficult to do so.

SQM's customer experience (CX) research data reveals that 92% of contact center executives agree that improving CX is essential. However, given management's struggle to accurately measure and deliver excellent CX, we thought sharing SQM's knowledge about the differences between Outside-In and Inside-Out CX operating practices would be helpful.

CX operating practices can follow an Outside-In or an Inside-Out approach. The difference is that Outside-In CX operating practices are based on the customer's perspective, whereas Inside-Out CX operating practices are based on the organization's perspective. Most organizations fall between the two approaches and are skewed towards Inside-Out.

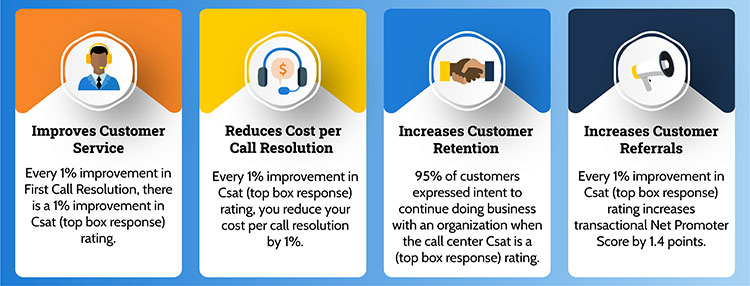

SQM views the Outside-In CX operating practice as more conducive to improving CX. This is because the foundation of Outside-In CX operating practice is to measure Csat and use customer feedback to improve customer service. Based on SQM's CX research, figure 2 shows the call center business case for measuring and improving Csat.

Figure 2: Call Center Business Case for Measuring and Improving Csat

3. How to Measure Customer Satisfaction?

As the old saying goes, you can't improve what you don't measure, and you can't measure what you can't define. There are many call center Csat questions and scales to measure Csat. At SQM, we use the following two questions to measure, track, benchmark, and help clients improve call center and agent Csat.

Call Center Csat:

Based on your last call to XYZ Company, overall how satisfied are you with their call center? Would you say you are… *

- Very Satisfied

- Satisfied

- Neutral

- Dissatisfied

- Or, Very Dissatisfied

Agent Csat:

Overall, how satisfied are you with the customer representative who handled the call? Would you say you are… *

- Very Satisfied

- Satisfied

- Neutral

- Dissatisfied

- Or, Very Dissatisfied

* For the Csat questions, most SQM clients use a labeled 4- or 5-point Csat survey scale. However, your Csat survey scale can be 1 – 4, 1 – 5, 1 – 7, or 1 – 10, and there's no universal agreement on which Csat scale is best to use.

Two different types of surveys are used to measure and improve Csat: transactional surveys and relationship surveys.

Transactional surveys are triggered within one day after a customer uses a call center. They provide insights into customer satisfaction with the call center and generate tactical information for making call center CX improvements. Post-call transactional surveys conducted within one day using phone, interactive voice response (IVR), and email survey methods are the best approaches for measuring call center Csat. Put differently, the sooner after the call a post-call survey is conducted, the better.

Relationship surveys are sent at regular and predefined intervals. They provide insights into customer satisfaction with the overall relationship and brand. Moreover, they generate strategic information to decide which business areas need to improve CX.

Voice of the Customer Listening Posts

When the call center's mission is to deliver great customer service, voice of the customer listening posts are a must. Call centers that provide great customer service have one thing in common: they use VoC listening posts to measure and improve customer satisfaction.

High-performing Csat call centers understand the best way to provide outstanding customer service is to tap into VoC listening posts. Furthermore, they know if you are willing to listen, customers will tell you just about everything you need to know to improve your Csat. Most importantly, high-performing Csat call center leaders action customer feedback opportunities to improve Csat.

A listening post for a call center VoC program or customer service management software captures and analyzes customers' expectations and experiences using a call center. Many managers assume they understand the call center customer service they deliver. Listening posts, such as post-call phone or email surveys, are great VoC measurement methods to confirm or dispel their assumptions by letting customers judge their call center experiences.

Listening posts provide customer feedback for insights into customer satisfaction, first call resolution, customer retention index, net promoter score, and areas to improve customer service. Post-call phone, interactive voice response (IVR), and email surveys are the best VoC listening posts to measure, benchmark, track, and improve a call center's customer satisfaction.

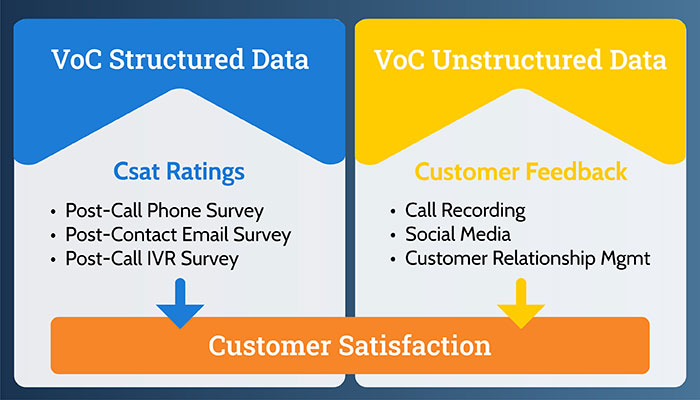

It is helpful to use structured VoC listening posts, such as post-call phone, IVR, or email Csat surveys, for Csat ratings and customer feedback, and unstructured VoC listening posts, such as recorded calls, social media, and customer relationship management, for customer feedback. Many studies indicate that 80 to 90% of the information a business handles is unstructured (e.g., primarily text). Figure 3 shows the common practices for measuring and improving a call center's customer satisfaction score.

Figure 3: VoC Structured and VoC Unstructured Data

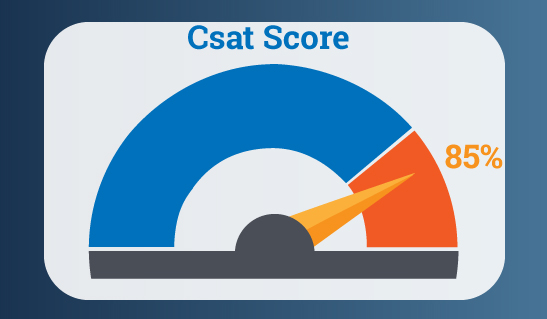

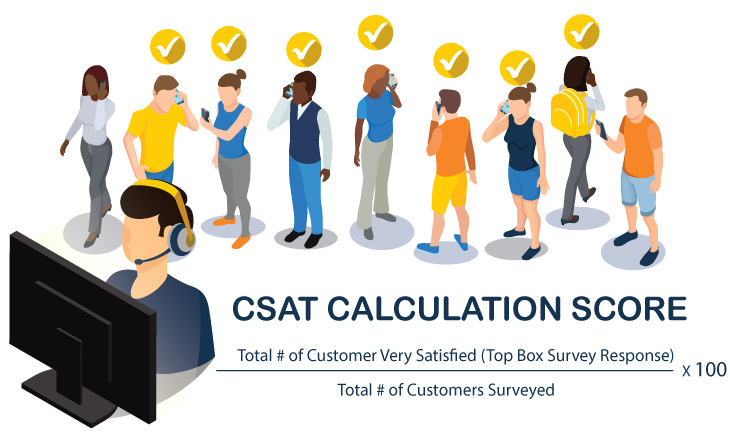

How to Calculate Csat Score?

Csat score measures customer satisfaction with the call center or agent service a customer experienced. Csat score can be calculated for different periods (e.g., the past 30 days, 90 days, or year). In addition, it is common to use a three-month rolling average to increase the survey sample size and improve the confidence level.
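To illustrate why a three-month rolling window helps, here is a minimal sketch. It assumes the rolling figure is computed by pooling the survey counts of three months (one possible reading of "rolling average"), and it uses the standard normal-approximation margin of error for a proportion. The monthly counts and function names are hypothetical, not SQM data or SQM's method.

```python
import math

# Hypothetical monthly tallies for one agent: (very_satisfied, surveyed).
monthly_counts = {
    "2024-01": (16, 20),
    "2024-02": (15, 20),
    "2024-03": (17, 20),
}

def top_box_rate(very_satisfied: int, surveyed: int) -> float:
    """Share of surveyed customers who answered 'Very Satisfied'."""
    if surveyed <= 0:
        raise ValueError("at least one survey is required")
    return very_satisfied / surveyed

def margin_of_error(rate: float, surveyed: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error for a proportion (normal approximation)."""
    return z * math.sqrt(rate * (1 - rate) / surveyed)

def rolling_three_month(counts, months):
    """Pool the counts of the given months; return (rate, total surveyed)."""
    very_satisfied = sum(counts[m][0] for m in months)
    surveyed = sum(counts[m][1] for m in months)
    return top_box_rate(very_satisfied, surveyed), surveyed

single_rate = top_box_rate(*monthly_counts["2024-03"])
pooled_rate, pooled_n = rolling_three_month(
    monthly_counts, ["2024-01", "2024-02", "2024-03"]
)
print(f"March only: {single_rate:.0%} +/- {margin_of_error(single_rate, 20):.1%}")
print(f"3-month:    {pooled_rate:.0%} +/- {margin_of_error(pooled_rate, pooled_n):.1%}")
```

With three times as many surveys in the window, the margin of error around the score narrows, which is the sample-size effect the paragraph describes.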

Csat score is based on a post-call phone or email survey conducted within 1 business day of the customer's interaction. The Csat score scales can vary, but the most common is a five-point customer satisfaction scale (1 = Very Satisfied and 5 = Very Dissatisfied). For example, an agent's Csat score is the total number of very satisfied customers (top box survey response) divided by the total number of customers surveyed. Using the very satisfied top box survey response to determine the Csat score is considered a best practice because any other survey response represents an opportunity to improve Csat.
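The top box calculation described above can be sketched in a few lines. This is a minimal illustration using the five response labels from the survey questions; the sample responses and the function name `csat_score` are hypothetical.

```python
from collections import Counter

# Response labels of the five-point scale quoted in the survey questions.
SCALE = (
    "Very Satisfied",
    "Satisfied",
    "Neutral",
    "Dissatisfied",
    "Very Dissatisfied",
)

def csat_score(responses):
    """Top box Csat: 'Very Satisfied' responses divided by all responses."""
    if not responses:
        raise ValueError("no survey responses")
    unknown = set(responses) - set(SCALE)
    if unknown:
        raise ValueError(f"unexpected responses: {sorted(unknown)}")
    counts = Counter(responses)
    return counts["Very Satisfied"] / len(responses)

# Hypothetical sample: 50 surveys for one agent.
responses = (
    ["Very Satisfied"] * 39
    + ["Satisfied"] * 6
    + ["Neutral"] * 2
    + ["Dissatisfied"] * 2
    + ["Very Dissatisfied"] * 1
)
print(f"Agent Csat: {csat_score(responses):.0%}")  # prints "Agent Csat: 78%"
```

Note that "Satisfied" responses do not count toward the score; under the top box method, only "Very Satisfied" does.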

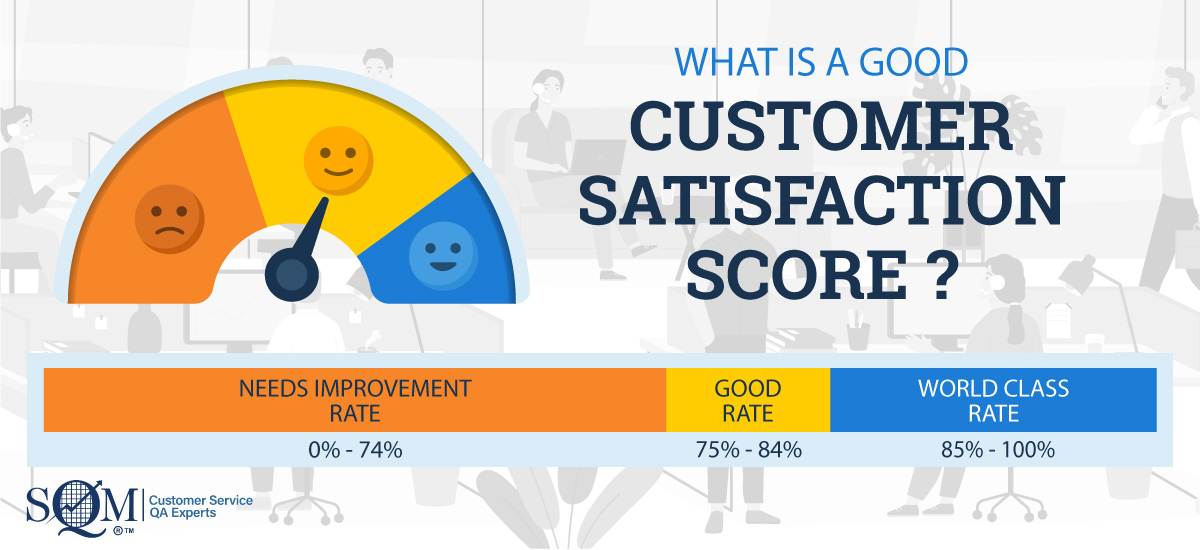

What is a Good Benchmark for Csat Score?

SQM's Csat research shows the Call Center Industry average Csat (top box response) benchmark score is 78%. This means that 78% of customers are very satisfied with the call center's customer service. The call center industry standard for a good Csat score is 75% to 84%. The World-class Csat score is 85% or higher, and only 5% of call centers can achieve a World-class Csat score.
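As a quick illustration, the benchmark bands quoted above can be expressed as a small lookup. The thresholds (75% and 85%) come from the paragraph; the label for scores under 75% and the treatment of values between 84% and 85% as "good" are assumptions made for this sketch.

```python
def benchmark_band(top_box_pct: float) -> str:
    """Map a top box Csat percentage to the bands quoted in the text."""
    if not 0 <= top_box_pct <= 100:
        raise ValueError("percentage must be between 0 and 100")
    if top_box_pct >= 85:
        return "world-class"
    if top_box_pct >= 75:
        return "good"
    # Assumption: the text does not name a band for scores under 75%.
    return "below the good range"

for score in (78, 85, 72):
    print(score, benchmark_band(score))
```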

Benchmarking Customer Satisfaction

Call center benchmarking for Csat is a one-time study that takes 2-3 weeks to complete. The customer survey takes approximately 5 minutes to complete. Customer surveys are conducted using a post-call survey method within 1 day of the call center interaction. SQM benchmarks a call center's Csat delivery against the performance of over 500 leading North American call centers.

We use a standardized approach for measuring Csat with all call center benchmarking participants. Our standardized call center Csat measurement practice is considered the gold standard for measuring and benchmarking Csat consistently and accurately.

Understanding how your call center Csat compares to lines of business (LoBs) within an organization, other call centers, and world-class call centers provides tremendous insights into the strengths you want to celebrate and build upon and the weaknesses you need to improve. Put differently, Csat benchmarking using a standardized Csat measurement method allows a call center to benchmark its Csat accurately and gain valuable CX insights.

It is essential to mention that customers using your call center are not only comparing you to your direct competitors. In many cases, they compare you to the best customer service they have had with their favorite companies from any industry.

Tracking Customer Satisfaction

Call center tracking for Csat is an ongoing study conducting surveys daily or weekly. Similar to benchmarking Csat, the customer survey takes approximately 5 minutes to complete for tracking Csat. Customer surveys are conducted using a post-call survey method within 1 day of the call center interaction.

Surveys can be conducted using any of our survey options (e.g., phone, IVR, or email). In addition, customer feedback notifications (e.g., world-class calls and customer dissatisfaction) are sent to you in real time and can be accessed through a mobile, tablet, or desktop device.

SQM's tracking study also qualifies agents and supervisors to become eligible to be certified as world-class customer experience providers. Recognizing agents and supervisors for high Csat is one of the best practices for motivating them to improve Csat and sustain great customer service.

4. How to Improve Customer Satisfaction?

The most crucial aspect of any Csat or customer service management (CSM) program is to action the customer survey feedback. Unfortunately, in SQM's experience, actioning customer survey feedback tends to be the weakest aspect of a Csat or CSM program. The primary reasons for not actioning customer survey data are competing projects; limited people, process, and technology resources; and limited Csat project management skills.

Many call centers spend too much time analyzing their data. Analyzing the data is vital to an effective Csat program; however, actioning the customer survey feedback can be hindered if most resources are used to analyze data. Some call centers are more comfortable just analyzing data; SQM calls these 'analysis paralysis' call centers. Another prominent reason call centers struggle with actioning customer survey feedback is that they do not know how to use the Csat data to improve CX.

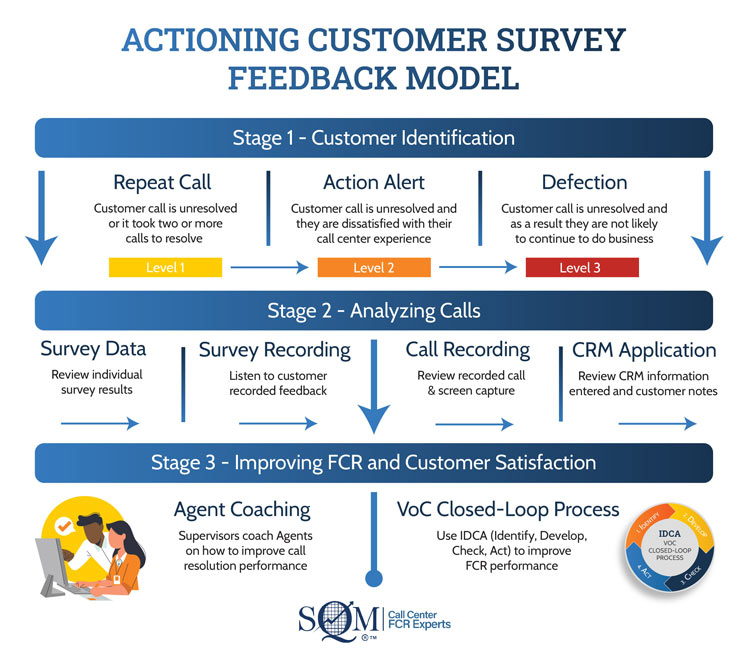

Figure 4 shows best practices for actioning customer survey feedback that SQM uses to counsel clients on how to improve first call resolution (FCR) and Csat performance. The following Actioning Customer Survey Feedback Model shows the three distinct stages for actioning customer survey feedback (i.e., 1. Customer Identification, 2. Analyzing Calls, and 3. Improving FCR and Csat).

Figure 4: Actioning Customer Survey Feedback Model

Stage 1 - Customer Identification

The first stage is identifying the customer's level of dissatisfaction with their experience of calling the call center. There are three levels of customer dissatisfaction, with 'Level 1' being the lowest and 'Level 3' the highest.

Level 1: Repeat Call

The customer's call is unresolved, or it took two or more calls to resolve. This customer is likely to be, at best, only somewhat satisfied with their call center experience as a result of their call being unresolved or taking multiple calls to resolve.

For the average call center, 12% of customers SQM surveyed had their call unresolved. Therefore, a repeat call represents an excellent opportunity to understand what happened on the call.

After the customer is surveyed, a 'Level 1' call that has been resolved does not require any immediate or further action. However, if the call is unresolved, the supervisor or escalation agent should action this call within one business day of surveying the customer to resolve the customer's issue or problem.

In addition, have an analyst determine which agent handled the first call and find out what happened.

Level 2: Action Alert

The customer call is unresolved, and they are dissatisfied with their overall call center experience. For the average call center, 6% of customers SQM surveyed would be classified as an action alert caller.

The 'Level 2' customer call does require action by someone within the organization within one business day of surveying the customer to attempt to resolve their issue or problem.

Many call centers SQM works with have dedicated escalation agents who handle calls where the customer is identified as a complaint caller during the survey process. Using dedicated escalation agents is a best practice for ensuring that customers identified as action alert callers are contacted to resolve their issue or problem.

Level 3: Defection

The customer call is unresolved, they are dissatisfied with their overall call center experience, and they stated they might defect. For the average call center, 5% of customers SQM surveyed would be classified as at risk of defection.

The 'Level 3' customer should be contacted by someone within the organization immediately after surveying the customer, or at least within one business day of surveying them, to resolve their issue or problem and achieve customer retention. In many cases, the call center is the last line of defense for retaining customers.

Many call centers SQM works with have dedicated customer retention agents who handle calls in which the customer is identified as a potential defection caller during the survey process. The significant advantage of using dedicated customer retention agents for handling defection customer calls is that, in most cases, they have the service recovery skills and proper authority level to resolve the customer issue or problem and hopefully retain the customer.
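The three levels and their follow-up timing can be sketched as a simple classification. This is a minimal illustration of the rules as described in this stage, assigning the highest level that applies; the `SurveyResult` fields and function names are hypothetical, not part of any SQM software.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class SurveyResult:
    resolved: bool          # did the customer say the issue is resolved?
    calls_to_resolve: int   # number of calls the customer reports making
    dissatisfied: bool      # dissatisfied with the overall experience
    may_defect: bool        # stated they might stop doing business

def dissatisfaction_level(s: SurveyResult) -> Optional[int]:
    """Return 1, 2, or 3 (highest applicable level), or None if none applies."""
    if not s.resolved and s.dissatisfied and s.may_defect:
        return 3  # Defection
    if not s.resolved and s.dissatisfied:
        return 2  # Action alert
    if not s.resolved or s.calls_to_resolve >= 2:
        return 1  # Repeat call
    return None

def follow_up(s: SurveyResult) -> str:
    """Follow-up timing as described in the text for each level."""
    level = dissatisfaction_level(s)
    if level is None:
        return "no follow-up needed"
    if level == 1 and s.resolved:
        return "no immediate action"
    if level == 3:
        return "contact immediately, at the latest within one business day"
    return "contact within one business day"

example = SurveyResult(resolved=False, calls_to_resolve=2,
                       dissatisfied=True, may_defect=True)
print(dissatisfaction_level(example), "-", follow_up(example))
```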

Stage 2 - Analyzing Calls

The second stage is to analyze why the customer's call was unresolved. Four different analysis areas are used to determine why the customer's call was unresolved (i.e., survey data, survey recording, call recording, and CRM). Each area provides unique insights into what happened on the call, into opportunities for coaching agents to improve their call resolution performance, and into ways to improve the call center's people, process, and technology practices to raise FCR and Csat performance.

Survey Data and Recorded Survey Feedback

The 'survey data' and 'recorded survey feedback' steps are when a supervisor or analyst reviews the results of an individual survey to understand why the call was unresolved. When analyzing survey results, it is helpful to review the customer ratings and feedback before listening to the call recording, viewing the screen capture, and checking the information in the CRM system.

The guiding principle is that the analyst must assume the customer is right until proven otherwise. Unfortunately, it is not uncommon for call center personnel to believe and practice that the customer is wrong until proven right. SQM considers this to be poor practice, and it can be very difficult for call center personnel who operate with this belief to improve FCR and Csat.

When analyzing customer feedback, it is good practice to read the customer comments and then listen to the survey recording. The recording allows the listener to hear the customer's tone, which the text survey feedback does not always capture.

SQM captures the customer feedback, analyzes the input, and then codes the feedback based on tier 1 and tier 2 repeat call reason metrics. The value of this approach is that targeted opportunities for improving Csat and FCR are identified.
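Once feedback has been coded, tallying the codes shows where repeat calls concentrate. The sketch below assumes each unresolved call has been coded with a (tier 1, tier 2) pair; the category names are invented for illustration and are not SQM's actual coding scheme.

```python
from collections import Counter

# Hypothetical coded feedback: (tier 1 reason, tier 2 reason) per unresolved call.
coded_feedback = [
    ("Agent", "Incorrect information"),
    ("Process", "Callback not made"),
    ("Agent", "Incorrect information"),
    ("Technology", "System outage"),
    ("Agent", "Did not take ownership"),
]

# Count calls per tier 1 reason and per (tier 1, tier 2) pair.
tier1_counts = Counter(tier1 for tier1, _ in coded_feedback)
tier2_counts = Counter(coded_feedback)

top_tier1, top_tier1_count = tier1_counts.most_common(1)[0]
print(f"Largest tier 1 reason: {top_tier1} ({top_tier1_count} calls)")
for (tier1, tier2), count in tier2_counts.most_common():
    print(f"  {tier1} / {tier2}: {count}")
```

Sorting by frequency is one simple way to pick the targeted improvement opportunities the paragraph mentions.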

Call Recording

This step applies when a surveyed customer's call was recorded. In this step, the call is evaluated using what SQM calls a Customer Quality Assurance (CQA) approach.

CQA combines call compliance metrics, judged by a QA evaluator via a call recording, and service quality metrics, judged by a customer via a post-call phone or email customer survey. CQA is based on the premise of letting the customer be the judge of their own experience when contacting an organization and is one of the best practices for driving improvements in the FCR and Csat scores.

CQA uses VoC data to judge call quality to enhance, not replace, the established call monitoring process. The customer survey information alone cannot replace the entire existing QA process because there are some contact center metrics that the customer cannot always judge (e.g., compliance and the accuracy of information). Thus, it is still necessary for the call center monitoring team to evaluate these metrics or use analytical tools to determine call compliance.

CRM Application

This step is also based on a customer being surveyed to identify which customers should be analyzed using customer relationship management (CRM) software. In this step, the call is evaluated using a CRM system.

In SQM's experience, using the CRM system is an excellent approach for determining what happened on the call, as long as the agent took good notes.

The CRM approach works well for determining why a call was unresolved because when a call is assessed in conjunction with customer survey feedback and CRM information, there is synergy.

Both assessment techniques focus on the 'outcome' versus the 'journey.' From a customer point of view, 'outcome' means whether or not the call was resolved, and from an organizational point of view, the outcome is the actual call data and notes that are in the CRM system. The CRM can also provide insights into the customer's journey for resolving an inquiry or problem.

Stage 3 - Improving FCR and Csat

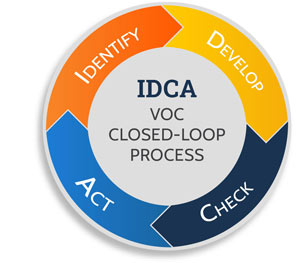

The third stage is improving FCR and Csat. There are two different areas for improving FCR and customer satisfaction: Agent Coaching and the VoC Closed-Loop Improvement Process (i.e., IDCA – Identify, Develop, Check, and Act). Both areas are designed to use customer survey feedback as the foundation and starting point for improving FCR and Csat performance.

The best practice for improving FCR and Csat is to always start with customer survey feedback supplemented by call recording and CRM data versus the other way around. Put simply, use the voice of the customer (e.g., post-call survey) feedback as the foundation for any customer service improvement initiative.

Agent Coaching

For agent coaching to be effective, agents must understand their VoC performance and expectations. Using an agent VoC dashboard accessible through their desktop is a best practice to ensure that agents clearly understand what is expected of them and which metric goals they will be held accountable for. In addition, agent desktop VoC data needs to be updated on an hourly or daily basis, and the agents need to have access to VoC data at any time. Finally, it is worth mentioning that most call center Csat improvement comes from reducing agent sources of error.

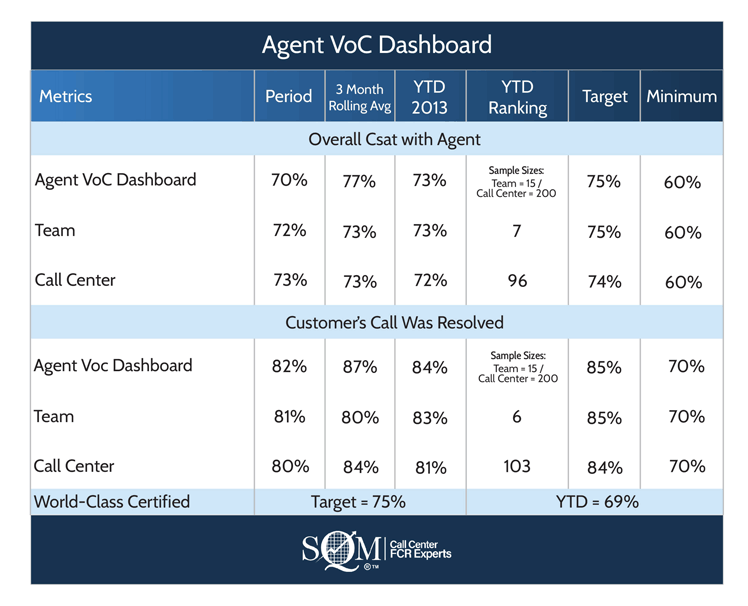

Figure 5 shows an example of an Agent VoC dashboard. It shows how an agent performs for the current period, the three-month rolling average, year-to-date (YTD) performance, the YTD ranking compared to their peers, the agent target, and the minimum expected performance. Note that it shows only two metrics: overall customer satisfaction with the agent and call resolution performance.

By focusing on just a few VoC metrics (no more than four) and making it easier for agents and supervisors to review their performance, call centers are more likely to see VoC performance improvements.

Figure 5: Agent VoC Dashboard (Example Data)
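A dashboard row of this kind can be modeled as a small record. The sketch below is a hypothetical data structure holding the columns named above; comparing the YTD value to the target and minimum, and the tie-handling in the ranking, are assumptions made for illustration, and all numbers are invented.

```python
from dataclasses import dataclass

@dataclass
class DashboardRow:
    metric: str
    current: float      # current period score
    rolling_3m: float   # three-month rolling average
    ytd: float          # year-to-date score
    target: float       # agent target
    minimum: float      # minimum expected performance

    def status(self) -> str:
        """Assumption: the YTD value is what gets compared to the goals."""
        if self.ytd >= self.target:
            return "at or above target"
        if self.ytd >= self.minimum:
            return "between minimum and target"
        return "below minimum"

def ytd_rank(agent: str, ytd_by_agent: dict) -> int:
    """1-based peer rank by YTD score; tied agents share the better rank."""
    own = ytd_by_agent[agent]
    return 1 + sum(1 for score in ytd_by_agent.values() if score > own)

# Hypothetical peer data and one agent's row.
ytd_by_agent = {"agent_a": 0.82, "agent_b": 0.79, "agent_c": 0.88}
row = DashboardRow("Agent Csat", current=0.85, rolling_3m=0.83,
                   ytd=0.82, target=0.85, minimum=0.75)
print(row.metric, row.status(), "rank", ytd_rank("agent_a", ytd_by_agent))
```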

VoC Closed-Loop Improvement Process

The VoC closed-loop improvement process is a well-accepted ongoing business practice for identifying what areas to improve and for implementing people, process, and technology practices that will improve FCR and Csat performance on an ongoing basis.

The basic premise of the VoC Closed-Loop process is to form a Csat improvement team and use VoC data (e.g., post-call survey and CRM/call recording systems) to identify, analyze, and develop solutions to action for improving customer service.

Call center leaders have invested in VoC programs and software that employ a VoC Closed-Loop process. For example, at SQM, our customer service management software has a VoC Closed-Loop improvement process (see figure 6) that consists of four steps – Identify, Develop, Check, and Act (IDCA) to improve FCR and Csat performance. The four ongoing sequential steps of our VoC Closed-Loop Csat Improvement Process are:

Figure 6: VoC Closed-Loop Csat Improvement Process

1. Identify - repeat call reasons to improve by measuring FCR and Csat performance

2. Develop - a solution and implement a test pilot to reduce repeat call reasons

3. Check - to see if the test pilot was successful by measuring changes in FCR

4. Act - on customer feedback by implementing a standardized improvement plan for reducing repeat call reasons for the entire call center
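The Check step can be illustrated with a simple before-and-after comparison. This sketch assumes FCR is measured as the share of surveyed customers whose issue was resolved on the first call, and it only compares the two rates; the counts, function names, and the `min_gain_points` threshold are hypothetical.

```python
def fcr_rate(resolved_first_call: int, surveyed: int) -> float:
    """Share of surveyed customers whose issue was resolved on the first call."""
    if surveyed <= 0:
        raise ValueError("at least one survey is required")
    return resolved_first_call / surveyed

def pilot_check(baseline, pilot, min_gain_points: float = 0.0):
    """Compare pilot FCR with baseline FCR.

    baseline and pilot are (resolved_first_call, surveyed) tuples.
    Returns (change in percentage points, whether the gain exceeds the threshold).
    """
    change = (fcr_rate(*pilot) - fcr_rate(*baseline)) * 100
    return change, change > min_gain_points

# Hypothetical counts: 140 of 200 before the pilot, 156 of 200 during it.
change, successful = pilot_check(baseline=(140, 200), pilot=(156, 200))
print(f"FCR change: {change:+.1f} points, pilot successful: {successful}")
```

A real check would also consider whether the survey samples are large enough for the difference to be meaningful before moving on to the Act step.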

5. How to Measure Customer Satisfaction Using Software?

Now that you have read about the business case for why Csat is so essential, how to measure it, and how to improve it, let's cover how to measure Csat using customer service management software.

Start learning how to measure customer satisfaction today with our free software demo for customer service management explicitly designed for call centers.

Csat measurement software is often called customer service management (CSM) or customer experience management (CXM) software. SQM's CSM software is specifically built for call centers and designed to measure, track, benchmark, and improve Csat, first call resolution, the net promoter score, customer service, quality assurance, and employee experience.

Capturing and measuring Csat using CSM software is only part of the broader picture for delivering great customer service. Call center customer satisfaction is complex and is a lagging indicator. As a result, it is essential to measure multiple metrics to get a comprehensive picture of customer service delivered by the call center. For example, FCR and call resolution metrics are leading indicators, and when these metrics are high or low, so is customer satisfaction (i.e., the lagging metric). Therefore, effective CSM software needs to capture leading and lagging data to measure and manage customer service effectively.

To keep up with customer expectations, understand performance, and improve FCR and Csat, a call center VoC program needs to employ CSM software. Again, CSM software is an excellent tool for capturing, measuring, benchmarking, and reporting customer service delivery. However, before choosing a CSM software vendor, you need to understand how it fits your call center VoC program. Listed below are a few questions to consider:

- What are your call center objectives? Higher FCR and Csat? Lower cost? Improve transactional NPS? You need to understand your goals and objectives before you start your VoC Program.

- Do your CSM software features need to be designed to specifically measure, coach, and reward agent and supervisor customer service delivery?

- Does your call center need a VoC closed-loop process at the agent level? For example, can agents follow up on customer feedback one-to-one to close the loop?

- What are the customer survey quota requirements? For example, will the quota be at the agent, line of business (LoB), or call center level? What will be the survey methods used (e.g., phone, email, IVR, website pop-up, or a mixture of different methods)?

- Who will be accountable for customer service results (e.g., agent to CEO)?

- Do you want to capture, analyze, and report internal and external data in the same software platform?

- Do you want the CSM software capabilities to include agent coaching, recognition, or soft skills e-learning?

- Do you want your CSM software capabilities to include recognizing agents for their Csat using a debit card for instant gratification?

- What customer insight data is already available, and by what function or department (e.g., research, workforce management, QA)?

- How are existing internal and external data being used? For example, is the data used by agents, supervisors, or leaders, and are the accountability metrics based on FCR or Csat performance targets?

- Where are the gaps in customer service understanding? Consider all contact channels (e.g., phone, email, chat, IVR, website), job levels (e.g., agent to CEO), segments (e.g., LoB, products, services), and call types (e.g., claims, service, sales, billing, technical, complaint).

- How will the information be analyzed? For example, will you use internal and external data for the same calls? Will you use structured data (e.g., Csat rating, QA score) and unstructured information (e.g., open-ended survey questions, call recording text)? Will analysts need to be fully trained in analyzing and reporting structured and unstructured data?

- How will the information be reported? For example, will agents, supervisors, or leaders access dashboard reporting? What VoC data needs to be shared? How often will the VoC data be shared, and who needs to see it? For example, will you share it with customers?

- How will you action the customer feedback? Will you use a VoC closed-loop process (e.g., to go from identifying to actioning people, processes, and technology improvement opportunities)? Do your CSM software requirements need VoC closed-loop capabilities?

mySQM™ FCR and Csat Insights Software

Find out why over 500 leading North American call centers trust mySQM™ Customer Service QA Software as their primary customer service management (CSM) tool to measure and improve first call resolution and customer satisfaction.

Our high-value CSM software is specifically built to help call centers improve their customer service and operating costs. Our CSM software provides FCR and Csat insights by capturing and reporting external (e.g., post-call surveys) and internal (e.g., call data, quality assurance) data, all in one powerful CSM software platform.

Post-call surveys are conducted and captured within one day of customer interaction, sending actionable insights within minutes of a customer survey being completed using the real-time CX notification feature of mySQM™ Customer Service QA Software.

mySQM™ Customer Service QA Software is unique because it provides powerful insights based on customer surveys and internal data captured. The data is used for coaching, benchmarking, and rewarding agents to motivate them to improve their customer service. As a result, our clients' average ROI is 450%, and the payback period is less than 3 months. Click on the Business Case link to learn more about the benefits of mySQM™ Customer Service QA Software.
